November 2014, Vol. 241, No. 11

Building A Risk Model For Midstream Shale Gas Assets

Access Midstream has identified a need for a broad-spectrum risk model for its pipeline assets. This business need extends beyond the requirements mandated by the federal Department of Transportation (DOT) and state regulatory agencies.

Regardless of regulatory status, all pipeline assets carry some risk, and even unregulated pipelines can carry a level of risk that may require mitigation for prudent operation. In addition to providing a tool to aid in protecting Access’ assets, risk management drives health and environmental safety decisions, protecting the company’s acceptance in the communities it operates in. In other words, risk-based decision-making can enhance the company’s ability to maintain its social license to operate.

This article outlines Access’ business needs and approach to risk assessment on its pipeline assets. Access is developing an in-house risk solution that works for midstream gas and oil gathering while remaining flexible enough to provide the same value for its regulated transmission assets.

The focus has been on designing a model for quantitative risk assessment that employs existing data. The model must distinguish between high- and low-risk lines, but should also generate almost real-time, actionable results that can be evaluated along with established risk protocols. With assets expanding quickly, the assessment tool must use automated processes that scale with the company’s growth.

Shale Plays

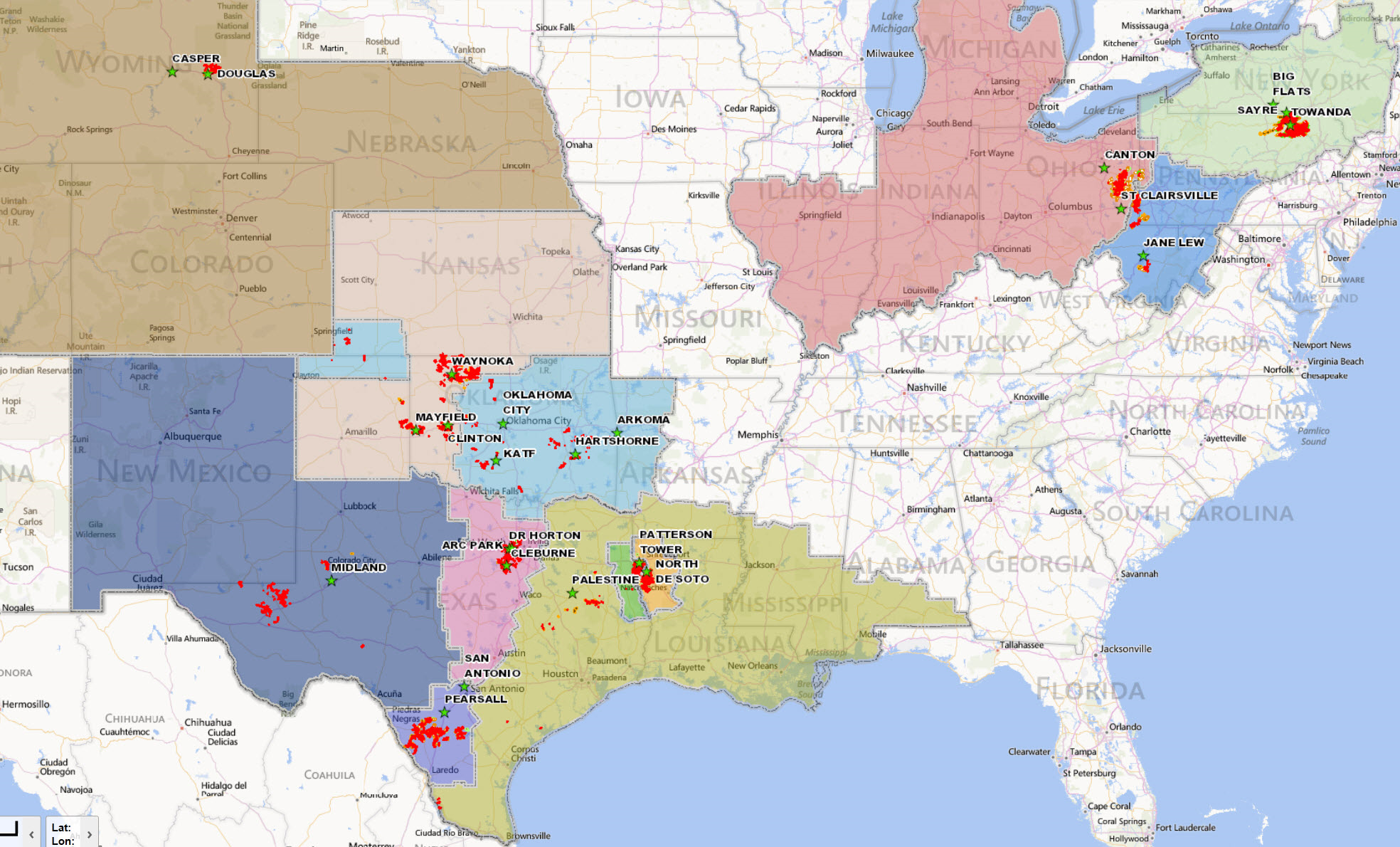

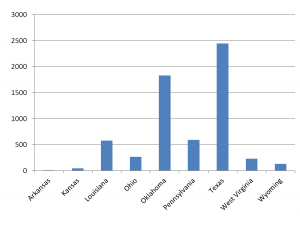

To develop a solution that addresses the needs of a gathering company, the traits that shape the criteria for an applicable risk model must first be identified. Access’ assets are primarily in shale gas plays, gathering product from single and multiple wellhead pads. The company operates in several states, including Arkansas, Kansas, Louisiana, Ohio, Oklahoma, Pennsylvania, Texas, West Virginia and Wyoming (Figure 1).

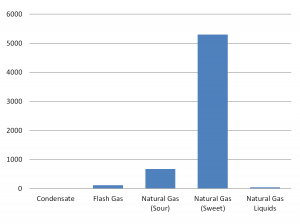

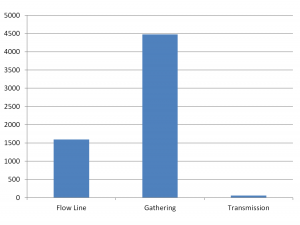

Some businesses may devote 10% or more of annual operating expenses and capital to carrying one product from Point A to Point B on a single pipeline. Others devote entire budgets to thousands of pipelines, but do so in nearly identical conditions with nearly identical products. As a diversified midstream company, Access must have a model that handles different commodities, materials, segment types and operating environments. Figures 2 through 5 illustrate the diversity of materials, products and locations that must be considered.

Figures 2-5: Miles of pipe by material, commodity, segment type and state.

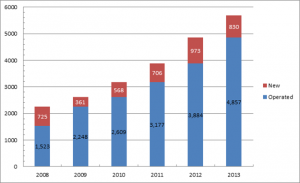

Access’ holdings include nearly 6,000 operationally unique pipeline assets and more than 6,000 miles of pipe. The total pipeline mileage increases by an average of 747 miles a year through construction and acquisitions, so the risk model must be scalable.

Figure 6: Access’ miles of pipe growth, 2008-2013

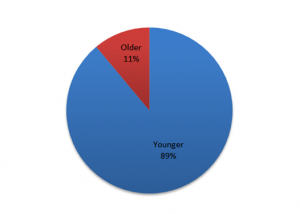

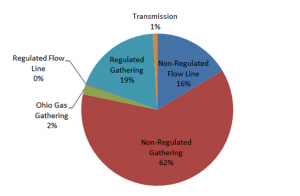

It is unusual for an unregulated operator, such as Access, to have integrity inspection data for many of its pipelines. Figure 8 illustrates the relative proportions of Access’ regulated and unregulated assets. Additionally, Access’ pipelines are relatively young (as shown in Figure 7), compared to other systems that have the benefit of decades of operations and maintenance data. For the model to estimate failure rates from a variety of threats, it must rely primarily on other widely available data.

Rates such as mils per year (MPY) of internal corrosion, line strikes per mile-year on rights-of-way (ROW) and coating failures per square foot are not always known, as many assets are new. In most cases, these values must be inferred from other sources. For example, internal corrosion rates may be based on bacteria samples, product flow rates, partial pressures of corrosive gases and the presence of chemical treatment.

Figure 7: Percentage by mile of pipe (less than 15 years old).

Figure 8: Regulatory status of pipe, percentage by mile.

Discussion Of Risk Model

Many criteria shape the functions of a valuable risk model; there are guidelines for prioritizing assessment in an integrity management plan that ensure protection for the public from serious environmental and safety consequences. DOT provides requirements in the Code of Federal Regulations (CFR), Title 49, Parts 192 and 195. Part 192 references the American Society of Mechanical Engineers (ASME) standard B31.8S, Section 5, which provides useful detail on traditional threats that should be included. American Petroleum Institute (API) Recommended Practice (RP) 1160 provides some risk management guidelines for hazardous liquid pipelines.

The scope of risk management prescribed in these documents is mostly directed to categories of pipelines of greatest concern such as transmission lines and lines in high-consequence areas (HCAs).

However, these categories do not fully capture additional high-risk elements that would be applicable to an enterprise risk management strategy for a midstream operator, such as the cost of down time for repairs, inability to consistently meet business obligations, value of lost commodity and adjacent property and structure damage costs. Therefore, it is important for a risk model to extend beyond minimum considerations. Many of the assessment and inspection techniques called for by the RPs and standards are not well-suited for all of a gathering company’s assets.

Access plots all of its risks to a standard heat map (risk matrix), with the chance of occurrence on one axis and magnitude of consequence on the other. Plotting qualitatively assessed threats provides a high-level picture and ensures threats identified are handled at the appropriate level.

To do so, a relationship must be established between the qualitative matrix and the quantitative estimates produced by the risk model. The description for each qualitative level of probability and consequence is correlated to a quantitative value, and the model is then calibrated to produce realistic failure rates and consequence values.
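The correlation between matrix levels and model outputs can be sketched as a simple threshold lookup. The example below is illustrative only: the five-level scale, the threshold values and the names `PROBABILITY_BOUNDS`, `CONSEQUENCE_BOUNDS`, `matrix_level` and `matrix_cell` are assumptions made for this sketch, not Access’ calibration.

```python
from bisect import bisect_right

# Hypothetical upper bounds separating probability levels 1-5,
# in failures per mile-year. Illustrative values only.
PROBABILITY_BOUNDS = [1e-5, 1e-4, 1e-3, 1e-2]

# Hypothetical upper bounds separating consequence levels 1-5,
# in dollars per failure. Illustrative values only.
CONSEQUENCE_BOUNDS = [1e4, 1e5, 1e6, 1e7]


def matrix_level(value, bounds):
    """Return the 1-based matrix level for a quantitative value.

    A value exactly on a boundary falls into the higher level.
    """
    return bisect_right(bounds, value) + 1


def matrix_cell(failure_rate, consequence_dollars):
    """Map a (failure rate, consequence) pair to a heat-map cell."""
    return (
        matrix_level(failure_rate, PROBABILITY_BOUNDS),
        matrix_level(consequence_dollars, CONSEQUENCE_BOUNDS),
    )


cell = matrix_cell(5e-4, 2.5e5)  # probability level 3, consequence level 3
```

Keeping the thresholds in one table means a recalibration changes the bounds, not the lookup logic.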

The risk model is designed to create a decision-making tool for all pipeline assets. The model must primarily rely on data that is gatherable on nearly all assets while being supplemented by more detailed data. Using companywide data sets allows for a single standard for review and shows how assets in one part of the country compare to assets in another. Some data sets include:

• Basic pipeline attributes, stored in a geodatabase

• Data created through automated, spatial processing

• Public spatial data (environmental, utilities, terrain, etc.)

• Operations and maintenance (O&M) data

• Environment, health and safety (EHS) data

• Construction data

• Subject matter expert (SME) data

Inline Inspection (ILI) data is incorporated, but the percentage of pipe miles and operating areas represented by the data are limited (Figures 9 and 10). Consequently, the expected internal and external corrosion rates for Access’ assets must be inferred from other data sets such as corrosion coupon data.

The risk model must estimate exposure to certain threats through indicators. Internal corrosion issues may be inferred from metered product stream content, sample data and flow characteristics. External corrosion exposure may be inferred from publicly available soils data, electrical utility maps and foreign pipeline data.

Figure 9: Percentage per mile of pipe with ILI data available.

Figure 10: ILI Coverage in Miles for Each Field Office.

The data sets may change daily as new pipelines are constructed and commissioned, record reviews are completed and O&M activities are documented. Access built a model that refreshes its data and calculations at least monthly. This is essential because it allows an almost real-time view of the company’s pipeline asset risk.

In general, the data sets grow larger in both the history of data collected and the miles of assets covered, emphasizing the need for a risk model that grows with the company without appreciable changes in risk assessment workload or performance. Access has accomplished this by automating data-gathering processes, establishing the model in a database structure and building equations into routines that are called by the database, rather than building the equations into the data itself.

Access is developing its model to produce results that correlate to predicted failure rates from various threats and weaknesses, including corrosion mechanisms, cracking, construction and manufacturing defects, failures of permanently installed equipment, incorrect operations and other man-made and natural outside forces. The interaction of threats is shown by aggregation of the failure rates from those threats.
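One common way to aggregate failure rates across threats is to add them, then convert the total to a probability. The sketch below assumes the threats act independently and that failures follow a Poisson process; both are assumptions of this example, as are the rate values and the function names, and none of it is taken from Access’ model.

```python
import math

# Hypothetical per-threat failure rates for one pipe segment,
# in failures per mile-year. Illustrative values only.
threat_rates = {
    "external_corrosion": 2.0e-4,
    "internal_corrosion": 5.0e-4,
    "third_party_damage": 3.0e-4,
}


def aggregate_rate(rates):
    """Total failure rate, assuming independent threats so rates add."""
    return sum(rates.values())


def failure_probability(rate_per_mile_year, miles, years=1.0):
    """Probability of at least one failure, assuming a Poisson process."""
    return 1.0 - math.exp(-rate_per_mile_year * miles * years)


total_rate = aggregate_rate(threat_rates)               # failures/mile-year
p_fail = failure_probability(total_rate, miles=10.0)    # over one year
```

Simple addition does not capture threats that amplify each other; a model that needs such interaction would require an explicit coupling term.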

For the purposes of this risk model, a failure is defined as the loss of containment of the commodity being transferred through a leak or rupture. Access assesses four primary types of consequences from such failures: health and safety, environmental, public and direct financial effects.

Since this model will be used for wide-encompassing asset risk assessment, it is important that the model estimates the most likely type of consequence. The model makes a distinction of whether rupture or leak is more likely and estimates the probability of ignition.
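The leak-versus-rupture and ignition distinctions can be pictured as a small event tree. The sketch below is a minimal illustration: the probabilities, the dollar values and the names `scenario_probabilities`, `costs` and `expected_cost` are all hypothetical and are not drawn from Access’ model.

```python
def scenario_probabilities(p_rupture, p_ignition_leak, p_ignition_rupture):
    """Split one failure into four scenarios: mode x ignition outcome."""
    p_leak = 1.0 - p_rupture
    return {
        ("leak", "no ignition"): p_leak * (1.0 - p_ignition_leak),
        ("leak", "ignition"): p_leak * p_ignition_leak,
        ("rupture", "no ignition"): p_rupture * (1.0 - p_ignition_rupture),
        ("rupture", "ignition"): p_rupture * p_ignition_rupture,
    }


# Hypothetical inputs for one segment. Illustrative values only.
probs = scenario_probabilities(
    p_rupture=0.2, p_ignition_leak=0.1, p_ignition_rupture=0.5
)

# Hypothetical consequence per scenario, in dollars per failure.
costs = {
    ("leak", "no ignition"): 10_000.0,
    ("leak", "ignition"): 100_000.0,
    ("rupture", "no ignition"): 200_000.0,
    ("rupture", "ignition"): 1_000_000.0,
}

# The scenario most likely to occur, and the probability-weighted cost.
most_likely = max(probs, key=probs.get)
expected_cost = sum(probs[s] * costs[s] for s in probs)
```

Reporting both the most likely scenario and the expected cost helps separate frequent, low-cost outcomes from rare, severe ones.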

One of the ongoing initiatives tied to this model’s development is the push for data quality improvement. A complete data verification effort on all pipelines would be cost-prohibitive. For the majority of pipelines with low associated risk, such an effort would not likely identify any conditions requiring action. Access has created an additional module to its risk algorithm that prioritizes data-gathering needs. These priorities are based on the operational risk that each asset carries as well as the difficulty of improving the data.
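A prioritization of this kind can be sketched as a ratio of risk carried to effort required. The scoring rule, the 1-to-5 difficulty scale and the sample assets below are assumptions made for illustration; the article does not describe the module’s actual formula.

```python
# Hypothetical assets: "risk" is an operational risk estimate in dollars
# per year; "difficulty" rates the effort to improve the asset's data
# (1 = easy, 5 = hard). Illustrative values only.
assets = [
    {"id": "A", "risk": 50_000.0, "difficulty": 5},
    {"id": "B", "risk": 20_000.0, "difficulty": 1},
    {"id": "C", "risk": 1_000.0, "difficulty": 1},
]


def data_priority(asset):
    """Higher score means data improvement is more worthwhile."""
    return asset["risk"] / asset["difficulty"]


# Rank assets so the best return on data-gathering effort comes first.
ranked = sorted(assets, key=data_priority, reverse=True)
ranked_ids = [asset["id"] for asset in ranked]
```

In this example asset B outranks the riskier asset A because its data is much easier to improve.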

Access has created an in-house risk model. All formulas, data, standards, personnel and hardware for risk assessment are housed at the company’s corporate headquarters. Changes to the data in the model may be integrated within hours, and formulas in the model may be altered in less than a day, using a management of change (MOC) process.

Flexibility is important as new data become available or research identifies ways to increase the accuracy of the assessment. Other sources for changes to the model include the numerous settings for validation of the model’s results. Access’ model is being continuously evaluated through quarterly results reviews with field staff, annual internal reviews by the asset integrity department and third-party evaluations by risk-modeling experts.

Risk-model performance is also evaluated against more ideal, less inferred metrics such as integrity inspection data. Early risk assessment results should be used to prioritize initial selection of which pipelines to inspect. Once sufficient data is gathered to draw conclusions about the accuracy of inferences the model makes from other data, the model should be changed if those inferences are incorrect.

Tornado plots are a valuable tool for adjusting the effect that a single attribute may have on an asset’s risk score. A tornado plot can identify which data inputs have the greatest and least effect on risk in the model. Inspection data can be used to validate whether certain inputs actually have the level of effect being modeled, and adjustments should be made as needed.
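The data behind a tornado plot comes from a one-at-a-time sensitivity analysis: each input is moved between a low and a high value while the others stay at baseline, and the inputs are sorted by the resulting swing. The sketch below uses a deliberately simple stand-in, `toy_risk`, which is hypothetical and is not Access’ risk equation; the input ranges are also illustrative.

```python
def toy_risk(corrosion_rate_mpy, wall_thickness_mils, consequence_dollars):
    """Hypothetical risk score in dollars per year (illustration only)."""
    years_to_failure = wall_thickness_mils / corrosion_rate_mpy
    return consequence_dollars / years_to_failure


def tornado(model, baseline, ranges):
    """Vary one input at a time and rank inputs by output swing."""
    rows = []
    for name, (low, high) in ranges.items():
        low_out = model(**{**baseline, name: low})
        high_out = model(**{**baseline, name: high})
        rows.append((name, low_out, high_out, abs(high_out - low_out)))
    # Widest bar first, as drawn at the top of a tornado plot.
    return sorted(rows, key=lambda row: row[3], reverse=True)


baseline = {
    "corrosion_rate_mpy": 5.0,
    "wall_thickness_mils": 250.0,
    "consequence_dollars": 1_000_000.0,
}
ranges = {
    "corrosion_rate_mpy": (2.0, 10.0),
    "wall_thickness_mils": (200.0, 300.0),
    "consequence_dollars": (500_000.0, 2_000_000.0),
}

bars = tornado(toy_risk, baseline, ranges)
order = [row[0] for row in bars]
```

Each row can be drawn as a horizontal bar from its low output to its high output; the ordering shows where better input data would change the result most.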

Risk Model Challenges

It is uncommon for assets like Access’ to have available integrity assessment data, and the company’s risk model has been designed to not rely primarily on assessment data. Inline inspection and direct assessment can provide accurate estimates for time to failure and likely failure mode on pipelines. The equations for making such estimates are fairly well understood.

Where assessment data is unavailable, a risk model must rely on available leading indicators. Some risk models are built around an ideal set of available data for each section of pipe to be analyzed. Access’ approach has been to use existing data to develop equations to estimate failure potential and consequences.

In addition to a lack of assessment records, there are other data challenges. A single pipeline attribute may be derived from multiple data sources of varying quality. For this reason, it is important to understand data sources and establish a “data hierarchy” for determining a pipe section’s attributes.

Data hierarchy is a prioritization tool for automatically selecting the most reliable information as the basis for a pipe segment’s risk assessment; it must be considered for each data variable prior to incorporation in the risk model. Wall thickness is an example of an attribute that could come from multiple sources. Sources may include direct measurements from ILI or alignment sheets, electronic data from a geo-database, or assumptions based on construction standards or adjacent pipelines.

Another example is longitudinal seam type which may come from construction records, field verification or assumptions based on a combination of in-service date, diameter and pipe material. During risk-model development, SMEs were consulted to prioritize data sources for variables that can be pulled from multiple locations. SMEs were also consulted to develop rules for making assumptions when data is unavailable.
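A data hierarchy can be sketched as an ordered list of sources that is searched until a value is found. The ranking below follows the order in which the wall-thickness sources are mentioned above, but the exact ordering, the source keys and the function name `resolve_attribute` are assumptions for illustration; in practice the ranking would come from SME review.

```python
# Hypothetical source ranking for wall thickness, most reliable first.
WALL_THICKNESS_HIERARCHY = [
    "ili",
    "alignment_sheet",
    "geodatabase",
    "construction_standard",
    "adjacent_pipeline",
]


def resolve_attribute(records, hierarchy):
    """Return (value, source) from the highest-ranked source with data.

    `records` maps source name to a value, or None when that source has
    no data for the segment. Returns (None, None) if nothing is available.
    """
    for source in hierarchy:
        value = records.get(source)
        if value is not None:
            return value, source
    return None, None


# Hypothetical segment: no ILI run, but the geodatabase and the
# construction standard both hold a wall thickness (inches).
segment_records = {"ili": None, "geodatabase": 0.250, "construction_standard": 0.219}
wall, wall_source = resolve_attribute(segment_records, WALL_THICKNESS_HIERARCHY)
```

Returning the source alongside the value lets later steps flag segments whose attributes rest on assumptions rather than measurements.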

Another challenge with developing a universal model for a company’s entire pipeline asset base is the need for flexibility. Some pipeline threat behaviors, such as internal corrosion and fatigue stress on material, vary considerably by commodity. In the internal corrosion example, quarterly pigging may result in a different mitigation effectiveness rating on natural gas pipelines than on crude oil pipelines. Water accumulation may be more likely in natural gas pipelines than in crude oil pipelines due to increased difficulty in achieving adequate sweeping.

Weaknesses such as construction and manufacturing defects that may be considered time-stable could be treated as weakening over time if the commodity carried is non-compressible. For threats in which the behavior varied drastically between commodities, a separate set of calculations was established for each commodity category.

Diverse pipeline operating conditions help drive flexibility in the risk model. Access’ pipelines generally carry unprocessed commodity, so the product pressure, temperature, gas composition and operating patterns vary greatly between shale plays and between gathering systems in one shale play.

Sales-quality gas generally has a well-defined composition and standard conditions for transport. However, some portions of a midstream company’s product stream may carry basic sediment, water or other non-commodity elements; operating conditions may be steady-state or transient. These are all factors that must be accounted for in estimating failure rates and consequences. Solutions for variability over time include using 30-day averages, time-weighted averages and maximum values over a time frame. Differences in product stream are accounted for by looking at the potential for accumulation in the line and making assumptions based on the segment type or use.
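The three summaries named above (trailing average, time-weighted average and maximum) can be sketched in a few lines. The function names, the pressure readings and the choice of hours as the weighting unit are assumptions made for this example.

```python
def trailing_average(daily_values, window=30):
    """Mean of the most recent `window` daily values."""
    recent = daily_values[-window:]
    return sum(recent) / len(recent)


def time_weighted_average(readings):
    """Average weighted by how long each value was held.

    `readings` is a list of (duration_hours, value) pairs.
    """
    total_time = sum(duration for duration, _ in readings)
    return sum(duration * value for duration, value in readings) / total_time


def maximum_over_period(readings):
    """Largest value seen in the period, regardless of duration."""
    return max(value for _, value in readings)


# Hypothetical operating pressures (psig): 10 hours at 800, 30 hours at 1,000.
pressure_readings = [(10.0, 800.0), (30.0, 1000.0)]
twa = time_weighted_average(pressure_readings)
peak = maximum_over_period(pressure_readings)

# Hypothetical daily values for 40 days; only the last 30 are averaged.
avg_30 = trailing_average(list(range(1, 41)), window=30)
```

Which summary suits a given input depends on the threat: a maximum may fit a pressure-driven failure mode, while an average may fit a slow mechanism such as corrosion.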

If the risk model is used for companywide decision-making and asset comparison, the results must be produced in a consistent, trusted manner. A robust MOC process is a crucial partner to flexibility in creating a successful model. While MOC requirements typically reduce the speed of implementing changes and incorporating new data, there are still advantages to using formal MOCs to change the model.

An MOC process will ensure changes are reviewed at the appropriate level prior to implementation. It may be determined that certain changes should be reviewed by a company SME or outside agency. MOC records provide thorough documentation that shows not only the review chain, but also the complete implementation and subsequent notifications. MOC enables version control and may also save time when reverting to previous forms of the risk model. Access’ Asset Integrity Department has developed an MOC form and standard with a comprehensive, electronic library of all past changes to its standards, forms and procedures.

Another challenge in developing a risk model is striking a balance between complexity and simplicity. The interaction of factors influencing the probability of failure from a given threat is extremely complex. It stands to reason that such interaction would also be difficult to model with a mere set of equations and tables. One approach to account for this would be to gather all of the data inputs along with time-to-failure rates on pipelines with known events and associated dollars spent.

Equations for time to failure and associated cost could be derived from linear regression analysis or another curve-fitting exercise, such as fitting a high-order polynomial function. However, it is unlikely the resulting equation would be completely decipherable. It may be difficult to explain the validity of such equations in an audit. It is important to develop equations in the risk model that show a clear, linear path of steps from development of a time to failure, to likely failure mode and finally the consequences associated with that failure mode.

The equations must be simple enough that they can be understood by several individuals in addition to the designers of the model; other users must be able to make changes to the model and use it to produce consistent results. Access is developing its risk calculations to be broken into small-step equations. Intermediate values between inputs and final probability and consequence rates are shown with real, tangible units next to them, such as excavations per mile-year, coating failures per square foot, structure dollars per square foot, etc.
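A small-step calculation with labeled intermediate values might look like the sketch below. The chain from excavations to failures, the conditional probabilities and the function name are hypothetical; they illustrate the idea of keeping units visible at every step and are not Access’ equations.

```python
def third_party_failure_rate(
    excavations_per_mile_year, p_strike_given_excavation, p_failure_given_strike
):
    """Return the failure rate and a trace of each intermediate step.

    Every step records a label, a value and its unit so that a reviewer
    can follow the path from inputs to the final rate.
    """
    steps = [
        ("excavation activity", excavations_per_mile_year, "excavations/mile-year")
    ]

    strikes = excavations_per_mile_year * p_strike_given_excavation
    steps.append(("line strikes", strikes, "strikes/mile-year"))

    failures = strikes * p_failure_given_strike
    steps.append(("failures", failures, "failures/mile-year"))

    return failures, steps


# Hypothetical inputs. Illustrative values only.
rate, trace = third_party_failure_rate(0.5, 0.01, 0.2)

for label, value, unit in trace:
    print(f"{label}: {value:.4g} {unit}")
```

Because each intermediate value carries a tangible unit, it can be compared with field experience on its own before the final result is trusted.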

A final challenge in quantitative risk modeling is incorporating it into the company’s enterprise risk management program. Risk assessment provides value in potential incident reduction through better asset management, but this value is difficult to measure. It also naturally has a degree of utility in its ability to drive quality assurance and improvements in company data. However, its greatest value is in driving efficiency in spending and risk-based justification for decisions. The predicted failure rates and consequences must be comparable to the company’s risk matrix metric.

Lessons Learned

One of the key elements of an effective risk model is an understanding of the equations being used. This may be achieved simply through good communication with the model developer, which builds familiarity with how each equation functions to calculate results.

If the company’s risk model is developed in-house, understanding is accomplished through careful sourcing of all equations and tables. There should be an explanation tied to each equation. Examples include citing a well-understood flow-dynamics phenomenon for leak calculations, a relationship between cathodic protection readings and external mitigation effectiveness established with empirical data or a percent effectiveness of oxygen scavenger treatment, based on a discussion with a company SME.

The model must balance the complexity of actual physical interactions that lead to asset risk with simplicity in its equations. Continuous validation of the model from many sources can enhance the confidence in risk assessment results. Other detractors from acceptance of results can include inaccurate data, false assumptions, incorrect application of data and disproportionate emphasis on the wrong data variables.

For each data variable and data variable source, there should be careful consideration of the value added to the risk model. Tornado plots are an excellent method for validating the marginal return of adding a variable or improving on an existing variable in the risk model. Tornado plots make it evident when certain variables have a high or low impact on risk rating.

A higher impact on risk means it is more important to input an accurate value for that data variable. This also indicates greater value in efforts to improve data quality for that variable. The converse is also true.

When designing an in-house risk model, the model should not be developed entirely in a vacuum. Access has invited criticism from SMEs within the company. It has also solicited detailed reviews from several well-known risk management consultants. Reviews of the model will continue on an annual basis as part of the company’s ongoing process improvement for risk management.

Conclusions

Risk assessment programs are not a built-in, mandatory cost of doing business for the midstream sector. Any additional expenditure on risk and integrity management directly affects the profitability of operation. However, risk is not simply associated with a limited selection of asset types, environmental categories or commodities. Therefore, it is imperative for Access to have a tool that allows it to be selective about how risk and integrity management are applied.

A model can certainly identify the riskiest assets and those with an unacceptable level of risk associated with ownership and operation. However, it is also possible for a company to over-resource a problem while other threats go unchecked if a complete view of risk is not taken. A broad-spectrum risk approach provides a decision-making tool that allows a company to achieve desired levels of efficiency.

With its risk approach, Access’ goal is to reduce risk measurably in each of its operating areas. This will be the basis for an eventual prescriptive approach to asset management in some areas of operations. A company could use reliable risk estimates at set levels to justify increasing or decreasing chemical treatment, requiring a construction caliper during commissioning or adding more sampling locations to a pipeline. A broad-spectrum risk model that uses multiple datasets puts a powerful spotlight on data.

The data evaluation module prioritizes data needs while providing context for reviewing risk assessment results. It is important to understand what inputs are driving estimated failure rates and consequences before business decisions, based on the results, are made.

The majority of data used in Access’ risk model was captured and stored prior to the company beginning a risk assessment program. Risk assessment integrates each data set that previously may have only been used by two or fewer business units. This maximizes the potential of data across the company. This risk approach fosters communication between all of the data stakeholders and acts as a force multiplier by giving valuable information back to the stakeholders.

Acknowledgement: This article is based on a presentation at the 26th International Pipeline Pigging and Integrity Management Conference, sponsored by Clarion Technical Conferences, in Houston, Feb. 10-13, 2014.
