March 2014, Vol. 241 No. 3

Features

Developments Toward Unified Pipeline Risk Assessment Approach

A certain amount of standardization in any process can be beneficial to stakeholders. In the case of pipeline risk assessment, standardization establishes process acceptability. This leads to consistent and fair regulatory oversight as well as minimum levels of analysis rigor.

On the other hand, too much standardization – a prescriptive approach – almost always adds inefficiency to any process. A mandated “one size fits all” risk assessment approach requires substantial overhead to establish and document the approach, followed by significant training for users and auditors. These costs are not the only downside. Innovation and creativity are stifled and, almost always, too much is done for some issues and not enough for others.

To strike the optimum balance, a set of essential elements is suggested. This establishes the minimum ingredients for acceptable pipeline risk assessment. Methodologies, additional ingredients and all details are left to the designer of the risk assessment, often the pipeline operator’s subject matter experts (SMEs). Setting essential elements ensures the benefits of standardization without loss of efficiency.

A Challenging Topic

Risk assessment, and pipeline risk assessment in particular, is a specialized field. Yet, under newer regulations and industry standards, most pipeline operators are being asked or directed to engage in this somewhat esoteric practice. Technically, they are to practice formal “risk management,” which is not exactly “risk assessment,” but the former does not occur without the latter.

Risk management is not a new concept; most regulations and standards have always implied a level of risk management. This is done via language that basically states: “perform sufficient mitigation so that failure does not occur or consequences are minimized” for certain risk aspects in which mitigation practice is not definitively prescribed. What is newer is the insistence by regulators on formal risk assessment leading to formalized processes to manage the measured risks. The days of decision-making via informal discussions among gathered managers and technical staff are waning.

Current Practice

Formal risk assessments of pipelines, using techniques similar to those employed in the nuclear, aerospace and chemical industries, have been used by some pipeline operators and in some regulatory environments for many years. But this practice has by no means become widespread. More common is the use of scoring or indexing systems among pipeline operators responding to a desire or mandate for more formal decision support. These techniques emerged as decision-makers sought more structure and consistency than was offered by informal discussions held to reach consensus on the best risk management options.

Background searches show the scoring systems becoming more commonplace in the 1980s (e.g., cast iron replacement programs) and 1990s. With the advent of U.S. IMP regulations and related standards in the 2000s and subsequent regulations in other countries, many operators adopted the same techniques to comply with the new regulations.

So, is pipeline risk assessment, as it is practiced by many operators, sufficient for formal integrity management programs (IMP)? Not according to some regulators. Federal regulators’ notices in January 2011, followed by public meetings in June 2011, reflected the increased scrutiny brought about by IMP and showcased regulators’ increasing skepticism regarding how pipeline operators are measuring risks.

Regulators’ recent criticisms are not unjustified. There is great disparity in approaches and level of rigor applied to risk assessment by pipeline operators. This is largely due to the absence of complete standards or guidelines covering this complex topic. The disparity leads to inconsistent and problematic oversight by regulatory agencies. Without some standardization or at least consistency of understanding, regulators cannot readily determine where deficiencies may lie.

The simplicity offered by relative or scoring type risk assessment models has made their use widespread. However, most of the early models will indeed require modifications in order to keep up with the new demands. Many of the risk assessments in use today were not designed for many of the applications now envisioned by regulatory IMP or other uses of risk assessment that are becoming commonplace.

Appropriate Level Of Standardization

Properly crafted, a listing of essential ingredients in a risk assessment would introduce a beneficial amount of standardization without becoming prescriptive. Specifying that all risk assessments contain, at a minimum, this short list of essential ingredients ensures that regulators and the regulated are on the same page. For example, a proposed list of essential elements is as follows:

This guideline sets forth the essential elements for a pipeline risk assessment. Using these elements ensures that meaningful risk estimates are produced. Furthermore, adoption of these minimum elements facilitates efficient and consistent regulatory oversight and helps manage expectations of all stakeholders.

The list of essential elements is intentionally very short, providing a foundation from which to build a comprehensive risk assessment program or modify an existing one. Application of these elements is easy and intuitive. This guideline supplements existing industry guidance documents on risk assessment and, therefore, does not repeat the important issues addressed there.

Measurements in verifiable units: The risk assessment must include a definition of “failure” and produce verifiable estimates of failure potential. Therefore, the risk assessment must produce a measure of probability of failure (PoF) and a measure of potential consequence.

Both must be expressed in verifiable and commonly used measurement units, free from intermediate schemes (such as scoring or point assignments). Failure probability or frequency must capture effects of length and time, leading to risk estimates such as “leaks per mile per year” or “costs/km-year,” etc.

Probability of failure based on basic engineering principles: All plausible failure mechanisms must be included in the assessment of PoF. Every failure mechanism must be properly measured by independently measuring the following three elements:

Exposure (attack) – The unmitigated aggressiveness of the force or process that may precipitate failure. This includes external forces, corrosion, cracking, human error and all other failure mechanisms. Example measurement units are “events per mile-year” or “mils per year (mpy) metal loss.”

Mitigation (defense) – The effectiveness of every mitigation measure designed to block or reduce an exposure. The benefit from each independent mitigation measure, coupled with the combined effect of all mitigations, is to be estimated. There are numerous common mitigations including depth of cover, patrol, coatings, inhibitors, training, and procedures, to name but a few.

Resistance (survivability) – The ability of the system to withstand forces that are not fully mitigated.

Assessment of the first two, exposure and mitigation, produces the probability of “damage without failure,” while all three produce an estimate of “damage resulting in failure.”
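A minimal Python sketch of how the three elements might combine is given here. The function names and input values are illustrative and not part of the guideline. The rule used to combine several mitigation measures (treating them as independent layers, so an event must slip past every one) is an assumption; the guideline asks only that the combined effect be estimated.

```python
from math import prod


def combined_mitigation(effectiveness):
    """Combine mitigation measures, each given as a fraction in [0, 1].

    Assumption for this sketch: the measures act independently, so an
    event gets through only if it slips past every one of them.
    """
    return 1.0 - prod(1.0 - m for m in effectiveness)


def damage_rate(exposure, mitigations):
    """Events per mile-year that reach the pipe (damage, with or without failure)."""
    return exposure * (1.0 - combined_mitigation(mitigations))


def failure_rate(exposure, mitigations, resistance):
    """Events per mile-year that reach the pipe and also exceed its resistance."""
    return damage_rate(exposure, mitigations) * (1.0 - resistance)


# Hypothetical inputs, for illustration only.
exposure = 0.5                 # unmitigated excavation events per mile-year
mitigations = [0.5, 0.6, 0.5]  # e.g. depth of cover, patrol, training
resistance = 0.8               # fraction of hits the pipe survives

print(f"combined mitigation: {combined_mitigation(mitigations):.2f}")
print(f"damage rate:  {damage_rate(exposure, mitigations):.3f} per mile-year")
print(f"failure rate: {failure_rate(exposure, mitigations, resistance):.3f} per mile-year")
```

Keeping the damage rate as its own function mirrors the distinction drawn above: exposure and mitigation alone give damage, and resistance is applied only when estimating failure.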

For each time-dependent failure mechanism, a theoretical remaining life estimate must be produced and expressed as a function of time.

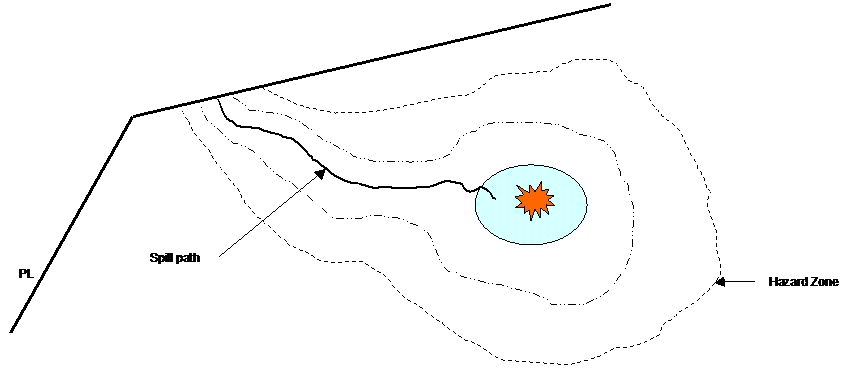

Fully characterized consequence of failure: The risk assessment must identify and acknowledge the full range of possible consequence scenarios associated with failure, including “most probable” and “worst-case” scenarios.

Risk profiles: The risk assessment must produce a continuous profile of changing risks along the entire pipeline recognizing the changing characteristics of the pipeline itself as well as its surroundings. The risk assessment must be performed at all points along the pipeline.

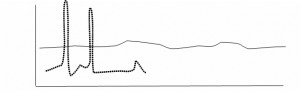

Exhibit 2: Comparing two pipeline risk profiles; risk (vertical axis) vs. length (horizontal axis).

Full integration of pipeline knowledge: The assessment must include complete, appropriate, and transparent use of all available information. Appropriateness is evident when the risk assessment uses all information in substantially the same way that an SME uses information to improve his understanding of risk.

Sufficient granularity: For analysis purposes, the risk assessment must divide the pipeline into segments in which risks are unchanging (i.e., all risk variables are essentially constant within each segment). Due to factors such as hydraulic profile and varying natural environments, most pipelines will necessitate the identification of at least five to 10 segments per mile, with some pipelines requiring hundreds per mile. Compromises involving the use of averages or extremes (e.g., maximums, minimums or weighted averages) to characterize a segment can significantly weaken the analyses and are to be avoided.
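One way to obtain segments in which every variable is constant is to break the line wherever any input changes. The Python sketch below illustrates that idea. The data layout, attribute names and station values are hypothetical and chosen only for illustration.

```python
def dynamic_segments(layers, start, end):
    """Split [start, end) wherever any attribute changes.

    layers maps an attribute name to a list of (from_station, to_station, value)
    runs. Returns a list of (seg_start, seg_end, {attribute: value}) tuples.
    """
    # Every run boundary inside the interval is a potential segment break.
    breaks = {start, end}
    for runs in layers.values():
        for lo, hi, _ in runs:
            breaks.update(b for b in (lo, hi) if start < b < end)
    stations = sorted(breaks)

    def value_at(runs, station):
        for lo, hi, value in runs:
            if lo <= station < hi:
                return value
        return None  # data gap; a real model would apply a stated default

    segments = []
    for seg_start, seg_end in zip(stations, stations[1:]):
        attrs = {name: value_at(runs, seg_start) for name, runs in layers.items()}
        segments.append((seg_start, seg_end, attrs))
    return segments


# Hypothetical data: stations in feet along one mile of pipe.
layers = {
    "wall_mm": [(0, 3000, 9.5), (3000, 5280, 12.7)],
    "cover_m": [(0, 1200, 0.9), (1200, 4000, 1.2), (4000, 5280, 0.6)],
}

for segment in dynamic_segments(layers, 0, 5280):
    print(segment)
```

With only two attributes this mile already splits into four segments. Each additional attribute can add breaks, which is consistent with the segment counts quoted above.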

Management of bias: The risk assessment must state the level of conservatism employed in all of its components: inputs, defaults (applied in the absence of information), algorithms and results. The assessment must be free of inappropriate bias that tends to force incorrect conclusions for some segments. For example, the use of weightings based on historical failure frequencies will almost always misrepresent lower-frequency, albeit important, threats.

Proper aggregation of risk elements: A proper process for aggregation of the risks from multiple pipeline segments must be included. For a variety of purposes, summarization of the risks (or risk components, such as PoF) presented by multiple segments is desirable (e.g., “from trap to trap”). Such summaries must avoid simple statistics (e.g., averages or maximums) or weighted statistics (e.g., length-weighted averages) that may mask the real risks presented by the collection of segments. Such summarization strategies often lead to incorrect conclusions and are to be avoided.
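The masking effect can be seen in a small Python sketch. The segment values are hypothetical. The aggregation shown (summing length-scaled rates and assuming independent, Poisson-distributed failures) is one possible approach under those stated assumptions, not a method prescribed by the guideline.

```python
import math

# Hypothetical segments: (length in miles, failures per mile-year).
segments = [(0.9, 1e-5), (0.1, 1e-2)]

total_length = sum(length for length, _ in segments)

# Expected failures per year contributed by each segment (rate times length).
contributions = [length * rate for length, rate in segments]
expected_failures = sum(contributions)

# Probability of at least one failure per year somewhere in the collection,
# assuming independent segments and Poisson-distributed failures.
p_any = 1.0 - math.exp(-expected_failures)

# For contrast: the length-weighted average rate, which hides the hot spot.
avg_rate = expected_failures / total_length

# Share of the total that comes from the short, high-rate segment.
hot_spot_share = max(contributions) / expected_failures

print(f"expected failures per year: {expected_failures:.6f}")
print(f"P(at least one failure per year): {p_any:.6f}")
print(f"length-weighted average rate: {avg_rate:.6f} per mile-year")
print(f"share from worst segment: {hot_spot_share:.1%}")
```

In this illustration the averaged rate is about ten times lower than the rate on the short segment, while that segment, one tenth of a mile long, accounts for roughly 99% of the expected failures. Reporting the per-segment contributions alongside the total keeps that information visible.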

Perhaps all can agree that, regardless of specifics of modeling, a list of essential elements such as these must be a part of an analysis. Each one is critical in properly measuring and understanding risk. The essential elements recommended here are actually very simple concepts and easy to implement. As a side benefit, potential modeling issues surrounding aspects such as “threat interaction” and “proper aggregation of risk results” largely disappear when these elements are present.

When all parties agree on what is essential and everyone measures those essential things in some fashion, then everyone is “speaking the same language.” Creativity is not stifled; flexibility and customization are still encouraged. A limited amount of standardization in measuring risk is therefore appropriate and useful to all stakeholders. Expectations are managed, audits run more smoothly, information sharing is improved, and risk management becomes more efficient.

Implementing A Risk Assessment

The overall steps for assessment of pipeline risk under a modern risk assessment methodology, and consistent with this guideline, may be summarized as follows:

• Define “failure” and level of conservatism for the risk assessment

• Exposure: Estimate exposures from each threat at all points along the pipeline

• Mitigation: Estimate combined effect of all mitigations at all points along the pipeline

• Resistance: Estimate the amount of resistance at all points along the pipeline

• Dynamically segment the pipeline based on collected data and estimates

• Probability of failure (PoF): Calculate PoF from each threat for each segment

• Consequence of failure (CoF): Identify representative consequence scenarios for each segment

• Risk: Produce risk values for each segment, perhaps in units of “expected loss” (EL) such as “dollars/mile-year”

The process implied by this guideline is intuitive and comprehensive. Equations used can and should be simple and grounded in sound engineering principles. For instance:

Time-independent failure mechanisms include third-party damage, geohazards, human error, sabotage and theft.

They can each be efficiently modeled as: (failure rate) = [unmitigated event frequency] x (1 – [mitigation effectiveness]) x (1 – [resistance])

For example,

0.5 excavations/mile-year x (1 – 90% mitigation) x (1 – 80% resistance)

= 0.01 failures/mile-year

= approximately 1% probability of failure per mile-year
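The step from a failure rate to a probability can be made explicit. The Python sketch below takes the 0.5 excavations per mile-year exposure from the example and assumes failures follow a Poisson process. That distribution is an assumption of this sketch and is not stated in the guideline. For small rates the probability is almost equal to the rate, which is why 0.01 failures per mile-year reads as about 1%.

```python
import math


def probability_of_failure(rate_per_mile_year, miles=1.0, years=1.0):
    """P(at least one failure) over the given length and time.

    Assumes a Poisson process with a constant failure rate.
    """
    return 1.0 - math.exp(-rate_per_mile_year * miles * years)


# Failure rate from the worked example, in failures per mile-year.
rate = 0.5 * (1 - 0.90) * (1 - 0.80)

print(f"rate: {rate:.4f} failures per mile-year")
print(f"P(failure), 1 mile, 1 year:    {probability_of_failure(rate):.5f}")
print(f"P(failure), 50 miles, 10 years: {probability_of_failure(rate, 50, 10):.3f}")
```

The last line shows why the rate and the probability should not be used interchangeably over long lengths or periods: the expected number of failures keeps growing, while the probability can never exceed 1.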

Time-dependent failure mechanisms include corrosion, fatigue and environmentally assisted cracking. They can each be efficiently modeled as:

(failure rate) = f(time-to-failure, TTF), where TTF = (available pipe wall) / [(wall loss rate) x (1 – [mitigation effectiveness])]

For example, an effective remaining wall thickness of 10 mm exposed to a potential corrosion rate of 0.5 mmpy, but which is mitigated 97%, shows:

TTF = 10 mm / (0.5 mmpy x (1 – 97% mitigation)) = 667 years
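The time-to-failure arithmetic can be checked with a short Python sketch. With the inputs quoted in the example (10 mm of wall, 0.5 mmpy and 97% mitigation), the mitigated loss rate is 0.015 mm per year and the TTF works out to roughly 667 years. The function name is illustrative. Because the form of f(TTF) is not specified here, the sketch uses a simple 1/TTF annual rate as an assumed stand-in.

```python
def time_to_failure(wall_mm, rate_mm_per_year, mitigation):
    """Years until the available wall is consumed at the mitigated loss rate."""
    mitigated_rate = rate_mm_per_year * (1.0 - mitigation)
    if mitigated_rate <= 0:
        return float("inf")  # fully mitigated: no wall loss is predicted
    return wall_mm / mitigated_rate


ttf = time_to_failure(wall_mm=10.0, rate_mm_per_year=0.5, mitigation=0.97)

# Assumed stand-in for f(TTF): a uniform 1/TTF annual failure rate.
annual_pof = 1.0 / ttf

print(f"TTF: {ttf:.0f} years")
print(f"annual PoF (1/TTF assumption): {annual_pof:.4f}")
```

Under that assumption the annual PoF is about 0.15%, which stays below the 1% per year level.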

PoF = <1% probability of failure per year

In these equations, a probability of damage emerges independent of probability of failure. This is a useful distinction and necessary to the understanding of PoF.

The modeled role of integrity assessments, including ILI, pressure testing, and direct assessments (DA), should be direct and clear, as it is in the real world:

• Detection and assessment of locations of reduced resistance

• Reduction in uncertainty

• Detection of failed mitigation

• Re-evaluation of estimated exposure levels

• ‘Re-setting the clock’ for previously estimated assumed degradation rates

Adherence to this guideline produces risk values with exceptional usefulness to all stakeholders. For instance, consider the following sample valuations:

The total consequences per failure at a location on a pipeline are estimated at ~$166k. This is the probability-adjusted expected loss from all pipeline failure scenarios at this location. The annual expected loss is obtained by multiplying this value by the annual failure rate. If that value is 0.00033 failures per mile-year and this “location” on the pipeline represents one mile, then the expected loss is ($166,000 per failure) x (0.00033 failures per mile-year) x (1 mile) = $55 per year. Therefore, over long periods, the cost of pipeline failures for this one mile of pipe is expected to average about $55 per year.

From Risk Assessment To Risk Management

The risk estimates generated in this way are extremely useful to decision-makers. Such estimates can become part of the budget setting and valuation processes. In this example, the company first compares these values with, among other benchmarks, a national average for all transmission pipelines of $650/mile-year.
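The expected-loss arithmetic and the benchmark comparison can be sketched in a few lines of Python. The figures are those quoted in the text ($166,000 per failure, 0.00033 failures per mile-year, one mile, and the $650/mile-year average). The function and variable names are illustrative.

```python
def expected_loss(consequence_per_failure, failures_per_mile_year, miles=1.0):
    """Annual expected loss in dollars for a stretch of pipe."""
    return consequence_per_failure * failures_per_mile_year * miles


# Values quoted in the text for the one-mile location.
el = expected_loss(166_000, 0.00033)

# National average for transmission pipelines, as quoted in the text.
benchmark = 650.0  # dollars per mile-year

print(f"expected loss: ${el:.2f} per year")
print(f"fraction of benchmark: {el / benchmark:.1%}")
```

Because both values are in dollars per mile-year, the comparison is direct: this location sits at under a tenth of the quoted average.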

Understanding how each pipeline segment contributes to the overall risk sets the stage for risk management. For risk management at specific locations, the costs and benefits of various risk mitigation measures can be compared by running “what if” scenarios using the mitigation effectiveness anticipated from the proposed actions. These risk estimates can also be used to establish “safe enough” limits by following pre-determined risk acceptability criteria such as those proposed in CSA Z662 Annex O or the widely practiced concept of as low as reasonably practicable (ALARP).
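A “what if” comparison of this kind might look like the following Python sketch. Every input is hypothetical: the baseline reuses round numbers from the earlier examples purely for convenience, and the two proposals, their effectiveness values and their costs are invented for illustration. The failure-rate formula is the time-independent one given earlier.

```python
def expected_loss(exposure, mitigation, resistance, consequence):
    """Dollars per mile-year: failure rate times consequence per failure."""
    return exposure * (1 - mitigation) * (1 - resistance) * consequence


# Hypothetical baseline for one mile of pipe.
base = dict(exposure=0.5, mitigation=0.90, resistance=0.80, consequence=166_000)

# Hypothetical proposals: (name, changed inputs, annual cost in dollars).
options = [
    ("extra patrols", {"mitigation": 0.95}, 400.0),
    ("concrete slab", {"mitigation": 0.99}, 2_000.0),
]

baseline_el = expected_loss(**base)
results = []
for name, changes, cost in options:
    el = expected_loss(**{**base, **changes})
    saved = baseline_el - el
    results.append((name, saved, saved / cost))

# Rank by risk reduction bought per dollar spent.
for name, saved, ratio in sorted(results, key=lambda r: r[2], reverse=True):
    print(f"{name}: saves ${saved:,.0f}/yr, benefit/cost = {ratio:.2f}")
```

With these invented numbers the cheaper measure returns more risk reduction per dollar even though the more expensive one removes more risk in total. That trade-off only becomes visible when risk is expressed in verifiable units.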

As the desire for more robust pipeline risk management grows, so does the need for superior risk assessment. A formal risk assessment provides the structure to increase understanding, reduce subjectivity, and ensure that important considerations are not overlooked. Associated decision-making is, therefore, more consistent and reliable when formal techniques are used.

Author:

W. Kent Muhlbauer is principal of WKM, LLC, a consultancy specializing in pipeline risk management. With over 30 years in the pipeline industry, Muhlbauer is an advisor to pipeline operators, regulators, and academia worldwide. WKMC is currently partnered with DNV GL in efforts to improve all pipeline risk management practice.
