November 2013, Vol. 240 No. 11


Leveraging GIS For Pipeline Integrity In High-Consequence Areas

As oil and gas pipeline companies struggle to do more with less, the industry must rely on technology to eke out efficiencies. Geographic information systems (GIS) have been a part of pipeline operations for decades. However, when an integrity management program relies on outdated methods and resource-intensive data collection processes, it can become overly expensive, highly inaccurate or even non-compliant.

Pipeline companies must satisfy federal (Pipeline and Hazardous Materials Safety Administration, PHMSA) and state regulators that their integrity management programs fulfill regulatory obligations. These rules and regulations require that an operator use all available information about its pipeline system, and the areas surrounding the pipelines, to assess potential risks and take appropriate action to mitigate the risks identified. Because of these requirements, data acquisition and analysis are often the most critical and challenging parts of a strong integrity management program.

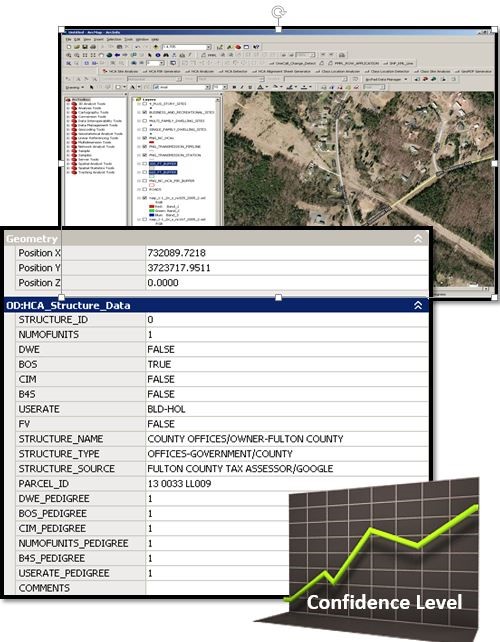

Data plays an integral part in everything a GIS does. We have all heard the data mantra, “garbage in, garbage out,” and this couldn’t be more relevant when trying to satisfy integrity management regulations. If the pipeline alignment is inaccurate, if the underlying base map is shifted, if identified sites are out of date, then class locations and high-consequence areas (HCAs) will likely be calculated incorrectly, and the integrity management program may be focused in the wrong areas.

This article is not primarily about PHMSA rules or HCA calculations; rather, it describes a sound and efficient methodology for gathering and processing HCA-required data, or virtually any other GIS data that must be acquired and maintained. It presents a four-step approach to gathering, validating and documenting GIS data that meets PHMSA expectations. This process helps organizations avoid over- and under-identifying structures and, consequently, misidentifying potential HCAs.

For gas transmission integrity management programs, class and HCA analysis relies heavily on structures and identified sites. Identified sites will serve as examples. Operators are expected to make “reasonable” efforts to search out identified sites (OPS Advisory Bulletin ADB-03-03, July 17, 2003).

The regulations do not require an exhaustive search, only a “good faith” effort, including consultation with public officials. Operators are expected to continually monitor population or usage changes, and update integrity practices accordingly at least once each year.

The Four-Step Approach

1. Follow a repeatable and verifiable process: Repeatability is perhaps the leading component of efficient data collection. Once a process is repeatable, it can be measured; once it is measured, its performance can be evaluated and streamlined, and the resulting efficiencies lower costs. Standard operating practices yield predictable, quality results. Repeatability means different staff can perform the same tasks and expect similar results. Conversely, ad-hoc processes lead to chaotic results and wildly variable quality.

2. Collect current, comprehensive and accurate data from authoritative sources: There are several key terms in this step that may make or break your integrity management program:

• Current – The data source must reflect the most up-to-date information obtainable.

• Comprehensive – All fields have values, all records are complete.

• Accurate – The data faithfully represents true values or readings and is free from error.

• Authoritative Source – A federal, state, county or local government repository of permits, licenses or registrations. These agencies should be recognized as the definitive authority for each particular data type, continually update their repositories with current information, and publish frequently and regularly.

As part of this step, each data source should be assessed for these objective qualities. This assessment leads to a confidence score. The confidence scoring system may be something like 0 to 10, “A” through “F,” pass/fail, or whatever makes sense to the organization. If the data is not from an authoritative source, is not current or is incomplete, it can still be used, but it must be given a low confidence score.

3. Prioritize the field validation effort on low confidence data: Not all sources acquired in the previous step are going to provide the most desirable levels of current, comprehensive or accurate data. Yet, if it is the best information available, it must be used. In the end, one must ask, “Is there confidence in this data?” When confidence in the data is low, field verification will prove invaluable. Prioritizing by confidence improves efficiency for field technicians: they can focus on comprehensively verifying lower-confidence data, while data with the highest confidence levels might receive only occasional spot checks.

4. Document your process and sources to meet audit requirements: To pass a PHMSA audit, documentation will be critical. PHMSA will look not just for results but for complete documentation of the methodologies used and the technologies employed for compliance.

If a process is repeatable and verifiable (Step 1), it needs to be documented. If it is not documented, time will be wasted trying to recreate the process each time it is needed. The data sources and the data assessment (confidence) processes (Step 2) must be fully documented to satisfy audit requirements. It is equally important to document the data that was not used and why. The field verification results (Step 3) must also be documented, both the records verified as correct and the corrections made.
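As an illustration only, the scoring, prioritization and documentation described in Steps 2 through 4 might be sketched in Python as follows. The field names, the 4/3/3 point weighting, the one-year currency window, the threshold of 7 and the sample sources are all assumptions made for this example; neither PHMSA nor the process described here prescribes them.

```python
import json
from dataclasses import dataclass
from datetime import date


@dataclass
class DataSource:
    """Hypothetical record describing one data source under assessment."""
    name: str
    authoritative: bool    # published by a recognized government repository
    last_updated: date     # when the source was last refreshed
    completeness: float    # share of records with all fields populated, 0.0 to 1.0


def confidence_score(src: DataSource, as_of: date, max_age_days: int = 365) -> int:
    """Return a 0-10 score: 4 points for authority, 3 for currency, up to 3 for completeness.

    The weighting is an assumption chosen for illustration.
    """
    score = 4 if src.authoritative else 0
    if (as_of - src.last_updated).days <= max_age_days:
        score += 3
    score += round(3 * src.completeness)
    return score


def validation_plan(sources, as_of: date, threshold: int = 7):
    """List sources lowest confidence first and assign a field validation action."""
    scored = sorted(
        ((confidence_score(s, as_of), s) for s in sources),
        key=lambda pair: pair[0],
    )
    return [
        {
            "source": s.name,
            "score": score,
            "action": "full field verification" if score < threshold else "spot check",
        }
        for score, s in scored
    ]


# Made-up sample sources, assessed as of November 1, 2013.
sources = [
    DataSource("county permits", True, date(2013, 9, 1), 0.98),
    DataSource("web directory", False, date(2011, 5, 1), 0.60),
    DataSource("state licenses", True, date(2012, 6, 1), 0.90),
]
plan = validation_plan(sources, as_of=date(2013, 11, 1))

# A plain JSON record of the assessment that could be kept for audit purposes.
audit_record = json.dumps({"assessed_on": "2013-11-01", "plan": plan}, indent=2)
print(audit_record)
```

With these sample values, the non-authoritative, out-of-date web directory scores lowest and is queued first for full field verification, while the two government sources receive spot checks. Keeping the scoring rule in one function is also what makes the assessment repeatable: different staff applying it to the same source should arrive at the same score.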

Operators that follow these four simple steps will find that PHMSA audits run much more smoothly and, more importantly, that HCAs and class locations are identified with significantly better spatial accuracy. The result is safer operation, better alignment with regulatory expectations, a more efficient integrity management program and substantially more confidence in the underlying data.

Author: Jim Pugh joined irth Solutions in 2013 and has more than 25 years of utility mapping and 20 years of GIS project management experience. He holds an associate’s degree from Valencia College and was certified as a Geographic Information Systems Professional (GISP) in 2005.
